Why Your AI Gives Inconsistent Answers (And It Is Not What You Think)

You ran the same query twice. Same question. Same customer. Different answer.

You probably blamed the AI. Or the model having a bad day. Or randomness.

Here is what is often actually happening: the model did not change. Your prompt did.

Not the words — the formatting. A space here. A newline there. A period instead of a colon. These seem like nothing. To the AI, they are everything.

What Tokenization Drift Actually Means

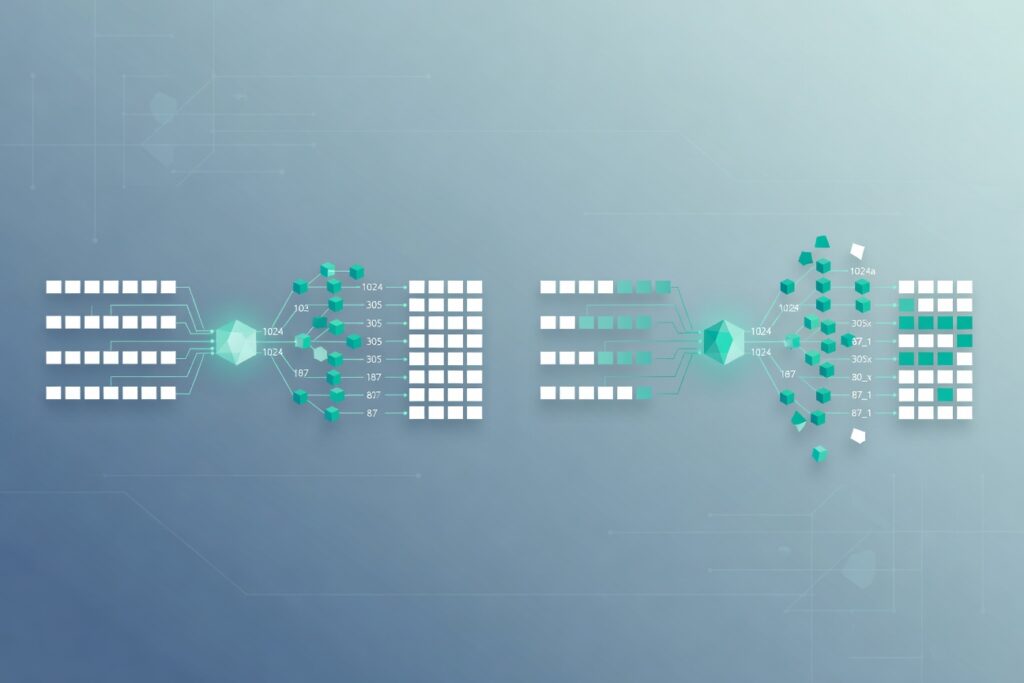

Before a language model processes any text, a tokenizer converts that text into a sequence of integers called token IDs. The same words, formatted differently, can produce different token sequences.

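Here is a real example from the GPT-2 tokenizer. GPT-4, LLaMA, and Mistral use their own vocabularies, so the exact IDs differ, but they rely on the same kind of subword tokenization and are sensitive to whitespace in the same way: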

The word “classify” with a leading space becomes token [36509]. Without the space, it becomes tokens [4871, 1958] — two tokens instead of one, completely different numbers.

At the input level, “ classify” and “classify” are as different as “apple” and “orange”: the token IDs have nothing in common.
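The effect can be reproduced with a toy tokenizer. The sketch below is not the GPT-2 algorithm (real byte-pair encoding applies learned merge rules); it is a simple longest-match lookup over a three-entry vocabulary. The IDs are the ones quoted in the example above, and the split of “classify” into “class” and “ify” is an assumption made for illustration.

```python
# Toy vocabulary. The IDs are copied from the example in the text; the
# "class" + "ify" split is an assumption, and real vocabularies hold
# tens of thousands of entries.
TOY_VOCAB = {
    " classify": 36509,
    "class": 4871,
    "ify": 1958,
}


def toy_tokenize(text: str) -> list[int]:
    """Greedy longest-match lookup against TOY_VOCAB (a simplification of BPE)."""
    ids = []
    pos = 0
    while pos < len(text):
        # Try the longest remaining substring first, then shrink it.
        for end in range(len(text), pos, -1):
            piece = text[pos:end]
            if piece in TOY_VOCAB:
                ids.append(TOY_VOCAB[piece])
                pos = end
                break
        else:
            raise ValueError(f"no token covers {text[pos:]!r}")
    return ids


with_space = toy_tokenize(" classify")
without_space = toy_tokenize("classify")
print(with_space)     # [36509]
print(without_space)  # [4871, 1958]
```

One extra space changes both the number of tokens and every ID in the sequence. To check a real prompt, run it through the tokenizer that ships with the model you actually use.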

Now think about what happens when your AI customer service prompt has a leading space in one interaction and not in another. Or when one query ends with a period and the next ends with nothing. The model is not being inconsistent — it is receiving completely different inputs.

Why This Matters for Your Business

When you fine-tune an AI for your business — say, an AI receptionist that handles calls and books appointments — you are not just training it on tasks. You are training it on the specific format, structure, and phrasing of how those tasks are presented.

If your production prompts deviate from that trained format, you are sending the model inputs it was never optimized to handle. It will still try to answer. It just will not answer as well.

This is one reason many AI implementations work in demos and fail in production. The demo uses clean, consistent formatting. Production involves messy, variable inputs: different employees using slightly different phrasings, API calls arriving in different formats, real-world customer messages that do not match the training data.

The Fix Is Simpler Than You Think

You do not need a better model. You need consistent formatting.

Lock down your prompt templates. Use the exact same structure every time: same spacing, same separators, same capitalization patterns. Test your prompts the way you would test any other piece of business software — with consistency in mind.
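One way to lock a template down is to build every prompt through a single function and test that messy inputs produce identical output. The sketch below is a minimal illustration: the template text, the field names, and the `build_prompt` helper are all hypothetical, and the whitespace rule should be replaced by whatever format your model was actually trained on.

```python
import re

# Hypothetical template: the labels and separators are illustrative only.
TEMPLATE = "Task: {task}\nCustomer message: {message}\nAnswer:"


def normalize(value: str) -> str:
    """Collapse runs of whitespace into one space and trim both ends."""
    return re.sub(r"\s+", " ", value).strip()


def build_prompt(task: str, message: str) -> str:
    """Fill the fixed template with normalized fields."""
    return TEMPLATE.format(task=normalize(task), message=normalize(message))


# The same request, once typed cleanly and once with stray whitespace.
clean = build_prompt("classify", "Can I book for Tuesday?")
messy = build_prompt("  classify\n", "Can I  book for Tuesday? ")

# A consistency test: both must yield exactly the same string.
assert clean == messy
print(repr(clean))
```

Because every caller goes through `build_prompt`, stray spaces and newlines are removed before the text reaches the tokenizer. The final assertion is the kind of check that can run in an ordinary test suite.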

If you are using an AI receptionist or any customer-facing AI system, the format of your prompts matters as much as the content. Do not let sloppy formatting undermine what your AI was trained to do.

If you are building or running AI-powered customer service — an AI receptionist that handles calls, checks your schedule, and books appointments automatically — the consistency of your prompts is not a technical detail. It is the difference between a system that works in demos and one that works every time a customer calls.